Improving Drupal performance by 80%

Drupal is known for the large number of queries it runs against the database. With thousands of concurrent users to serve, the database can quickly turn into a major bottleneck. This was the case with http://kwestiasmaku.com - a very popular recipe website visited by millions of cooking enthusiasts.

When we started our cooperation with KwestiaSmaku, we initially focused on adding new features to the website. Eventually, though, growing popularity forced us to look at the application from a performance point of view.

Our Drupal developers have carried out many performance optimisations of various types, and the case below is just one example of what we often achieve.

Analysis

To tackle the performance bottlenecks we first wanted to understand what causes the greatest load. Usually, there are two possibilities:

- The website's code is inefficient (it can contain obvious errors that cause unnecessary database queries, sometimes repeated in loops).

- There may be no direct errors. You can tick all the boxes of building the website the Drupal way, following all the best practices, and still hit a bottleneck (usually database related) once user volume gets high enough.
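To make the first possibility concrete, here is a minimal sketch of a query repeated in a loop next to a single joined query. It uses Python and an in-memory SQLite database purely for illustration; the `recipe` and `category` tables, the sample rows, and the `CountingConnection` helper are all hypothetical and have nothing to do with the actual KwestiaSmaku schema or code.

```python
import sqlite3


def build_demo_db():
    """Create a tiny in-memory database with hypothetical recipe data."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE recipe (id INTEGER PRIMARY KEY, title TEXT);
        CREATE TABLE category (recipe_id INTEGER, name TEXT);
        INSERT INTO recipe VALUES (1, 'Pierogi'), (2, 'Zurek'), (3, 'Sernik');
        INSERT INTO category VALUES (1, 'dinner'), (2, 'soup'), (3, 'dessert');
        """
    )
    return conn


class CountingConnection:
    """Thin wrapper that counts how many queries are executed."""

    def __init__(self, conn):
        self.conn = conn
        self.queries = 0

    def execute(self, sql, params=()):
        self.queries += 1
        return self.conn.execute(sql, params)


def categories_in_loop(db):
    """Anti-pattern: one query for the list, then one more query per row."""
    result = {}
    recipes = db.execute("SELECT id, title FROM recipe ORDER BY id").fetchall()
    for recipe_id, title in recipes:
        row = db.execute(
            "SELECT name FROM category WHERE recipe_id = ?", (recipe_id,)
        ).fetchone()
        result[title] = row[0]
    return result


def categories_joined(db):
    """Same data fetched with a single JOIN."""
    rows = db.execute(
        "SELECT r.title, c.name FROM recipe r "
        "JOIN category c ON c.recipe_id = r.id ORDER BY r.id"
    ).fetchall()
    return dict(rows)


loop_db = CountingConnection(build_demo_db())
join_db = CountingConnection(build_demo_db())
print(categories_in_loop(loop_db), loop_db.queries)
print(categories_joined(join_db), join_db.queries)
```

Both functions return the same mapping, but the looped version issues one extra query per recipe. On a listing page with dozens of items served to thousands of concurrent users, that difference adds up quickly, which is why this kind of pattern is usually the first thing a profiler run is meant to rule out.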

We did not build KwestiaSmaku but took over its maintenance, so first we wanted to make sure that the custom modules did not contain any surprises that would fall under the first possibility above. We used the Xdebug profiler and Blackfire for this. These tools are similar in many respects, but each also offers some unique options. Both allow you to observe how the page is generated and how much time is spent in each function in the call stack.

One thing the tools revealed was the browsercap module, which was installed but not used for anything. It did, however, query the database heavily on every page request. Apart from this, we found some sub-optimal things we could fix, but nothing major enough to get us out of the database bottleneck. We had to move to phase 2 - performance optimization.

One of the best tools for understanding what happens on your server is New Relic. The data it gathered confirmed what we had suspected from the beginning - the heaviest and most time-consuming queries were the ones generated by the Views module. KwestiaSmaku used fairly complex views to display lists of recipes on the front page and in categories. The Views module is great, but it is a double-edged sword - you can quickly create complex lists of entries, but you get big and heavy database queries with multiple JOINs and nested sub-queries.

Search API Solr

Apart from setting a time-based cache or creating a slave database and using it to feed the view, there was not much we could do to optimize the database queries built by Views. To work around this, we proposed moving the whole view from a database query to an index based on Apache Solr. We already used Solr to power the website's search box, so the only thing we needed to do was create another index that would hold the data we needed to display on the lists of recipes.
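As a rough illustration of what "feeding a list from an index" means, the sketch below builds a request URL for Solr's standard select handler, using the common query parameters `q`, `fq`, `sort`, `fl`, `start` and `rows`. It is written in Python only to keep it short and runnable; on the actual site the queries are generated by Drupal's Search API integration, not by hand. The core URL, the field names (`category`, `created`, `title`, `image_url`) and the function name are assumptions made up for this example.

```python
from urllib.parse import urlencode


def recipe_listing_url(base_url, category, page=0, per_page=12):
    """Build a Solr select URL for one page of a recipe listing.

    All field names here are hypothetical; a real index defines its own.
    """
    params = {
        "q": "*:*",                       # match every indexed document
        "fq": f'category:"{category}"',   # filter query: narrow to one category
        "sort": "created desc",           # newest recipes first
        "fl": "id,title,image_url",       # return only the fields the list shows
        "start": page * per_page,         # offset for pagination
        "rows": per_page,                 # page size
        "wt": "json",                     # response format
    }
    return f"{base_url}/select?{urlencode(params)}"


# Hypothetical local Solr core named "recipes".
print(recipe_listing_url("http://localhost:8983/solr/recipes", "desery", page=2))
```

The point of the design is that filtering, sorting and paging all happen against a flat, pre-built index, so rendering the list no longer requires the JOINs and sub-queries that the database had to execute for every uncached request.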

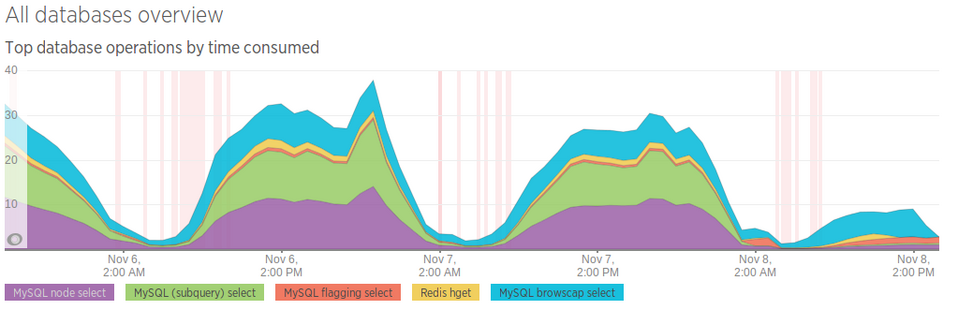

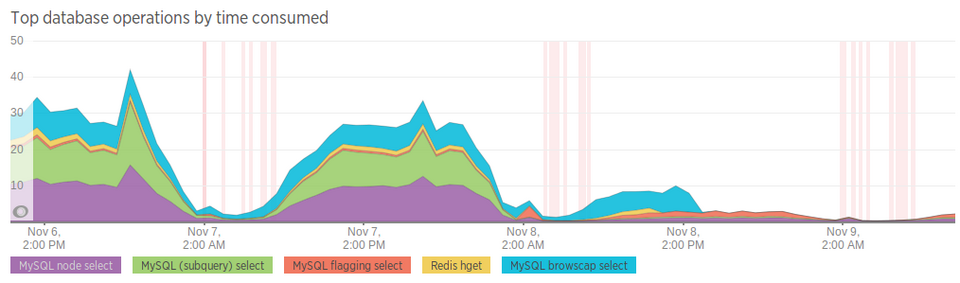

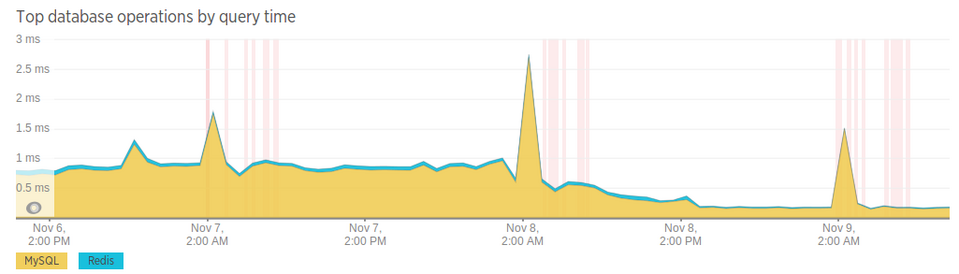

With Solr, we removed the heavy database queries, substantially decreasing the load on the database. This is clearly visible on the New Relic graphs from that period. We released the change on the night of 7th to 8th November, and by the following day the database was already under much less stress.

Next, on 8th November, we disabled the browsercap module, which was still executing an inefficient query (shown in blue on the graph).

Thanks to the change from the database to an Apache Solr index, we completely eliminated the most inefficient and time-consuming database queries. The longest queries are now 5 times shorter than before the change (the sharp spikes on the graphs are nightly backups).

Thanks to our optimizations, kwestiasmaku.com can grow further without the need to change servers or implement a complex server infrastructure.

If you have a website with performance issues, give us a shout. As part of our Drupal development services, we often improve website performance.