Automated Tests on Multiple Browsers

Testing on multiple browsers is a must nowadays, but is it worth automating these tests? It is definitely worth considering, and this article may help you make that decision. We will show you how to minimise the disadvantages of this solution and how to make the most of its advantages. You will learn how to set up such tests in Codeception and where to get the browsers you will want to use in them.

Cross-browser testing

Cross-browser testing (i.e. testing on various browsers) is nowadays one of the most important aspects of creating any web page or web application. Any responsible developer must consider the compatibility of their page with the browsers their users actually use. Although the discrepancies between browsers are much smaller than they used to be, we still quite often find that browsers interpret the same JavaScript code differently, or that a given browser version does not support a specific fragment of our CSS. We must also bear in mind that our users will not always use the latest version of a given browser, which is why it is important to check several versions of it.

Analysing the traffic on our page can lead us to a really long list of browsers and devices that we should test in order to make sure that we provide our users with a page of the highest quality. A question arises here: if I always do the same thing on all devices and expect the same effect, would it not be a good idea to let automated tests help me with this? I believe that this is worth considering. It is also good to calculate the costs and benefits of such a solution.

All the examples described below are based on Codeception, but nothing keeps you from transferring these solutions to your own technology. If you do not yet know what Codeception is and how to set it up in your project, be sure to read about how to automate tests in docker console.

Environments

In this article, we are starting from the point where we have a working project with one browser set up.

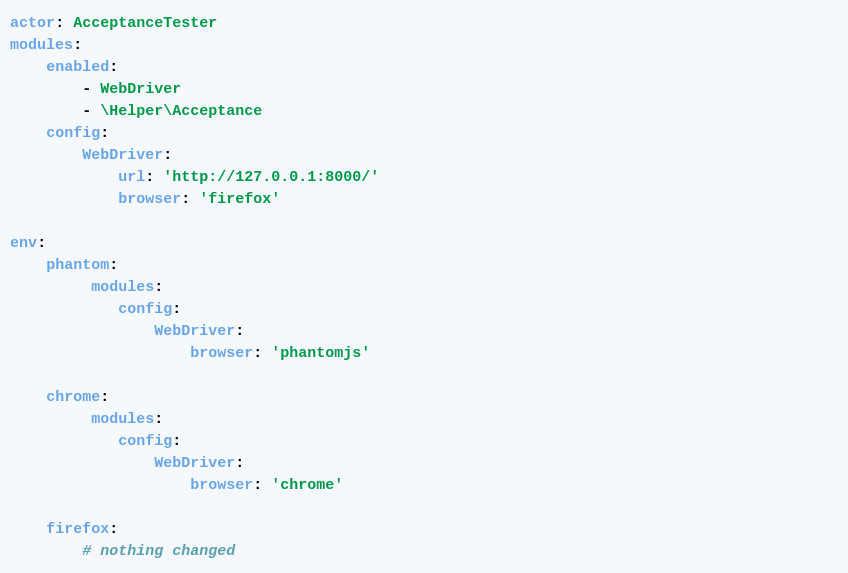

The authors of Codeception anticipated that users would want to run tests in different configurations, so in the suite configuration file (for example acceptance.suite.yml) we can override the default settings. A typical example is running the tests on several different browsers, as in the picture below:

In the "env" section you can define settings under any name (phantom, chrome, firefox here) that override the default configuration declared earlier.

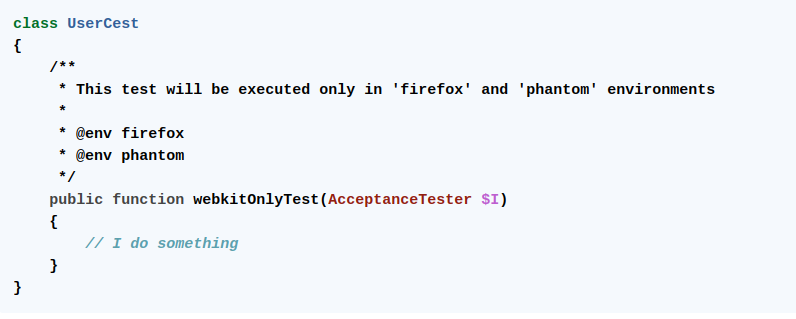
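
As a text version of such a configuration, here is a minimal sketch. It assumes the WebDriver module is used; the URL is a hypothetical address of the tested site, and only the environment names (phantom, chrome, firefox) come from the description above.

```yaml
# acceptance.suite.yml (sketch; the URL and module options are assumptions)
actor: AcceptanceTester
modules:
    enabled:
        - WebDriver:
            url: 'http://localhost/'   # hypothetical address of the tested site
            browser: chrome            # default browser, used when no --env is given
env:
    phantom:
        modules:
            config:
                WebDriver:
                    browser: phantomjs
    chrome:
        # the default configuration already uses Chrome, so nothing is overridden
    firefox:
        modules:
            config:
                WebDriver:
                    browser: firefox
```

Each key under env only lists the values that differ from the defaults; everything else is inherited from the main modules section.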

With the general configuration behind us, we can move on to the individual test files. Here we can add the @env annotation to a test (for example @env chrome) and in this way decide which tests are to be run in a given environment.

Now we can easily switch between the configurations and choose which tests are to be executed. All we need to do is add the --env parameter with the name of the specific environment to the command that runs the tests. Based on the previous article, it will be, e.g.:

dcon 'test --env chrome'

when we want to run tests using the Chrome browser. (The quotation marks have been added to make the docker console treat the env parameter as part of the test command, and not as a separate parameter.)

We can also perform tests on several environments by executing the command:

dcon 'test --env chrome --env phantom --env firefox'

or combine the settings with the command:

dcon 'test --env chrome,mobile --env phantom,desktop --env firefox,desktop'

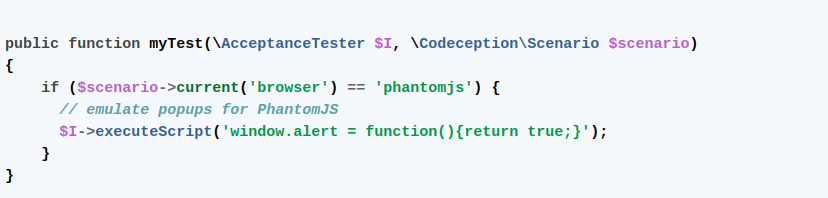
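
For combined names such as chrome,mobile to work, every name has to be defined in the env section; Codeception then merges the listed environments into one configuration. The sketch below shows how the mobile and desktop environments could look. The window sizes are assumptions chosen only for illustration.

```yaml
# acceptance.suite.yml, additional environments (sketch; sizes are assumptions)
env:
    mobile:
        modules:
            config:
                WebDriver:
                    window_size: 375x667     # assumed phone-sized viewport
    desktop:
        modules:
            config:
                WebDriver:
                    window_size: 1920x1080   # assumed desktop viewport
```

With these entries in place, --env chrome,mobile would start Chrome with the smaller window, while --env firefox,desktop would start Firefox with the larger one.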

It is also worth mentioning that inside a test we can check which environment is currently set, for example by calling $scenario->current('env') on the Scenario object injected into the test.

This gives us the ability to perform specific actions depending on the browser setting, and thus – handle any exceptions resulting from differences that we know about and that are acceptable.

Docker images for Selenium

Since we already know how to configure different environments, it is worth asking ourselves the following questions:

- Do I need to have all browsers on my computer?

- How can I easily decide which browser version will be tested now?

Here, for example, docker images provided by Selenium come to our aid. Currently, they make it possible to build a container with a selected version of the Chrome, Firefox, or Opera browser ready to run tests on it. This is really a good solution because changing the browser version only requires us to change the number in the image tag (we can also build and define more than one version).

Additionally, we can use the hub to build a whole pool of different browser versions that will be available for testing. I recommend experimenting with creating your own structures, preferably using a docker-compose file. The project is well documented and is being continuously developed. Recently, it became possible to record your tests. For those creating small projects, it can be important to know that all these images are completely free to access and use. The biggest downside is the limited number of browsers available.
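
A hub with two browser nodes could be sketched in a docker-compose file as follows. This assumes the Selenium 4 generation of the images (older images were configured differently); the service names are hypothetical, and the latest tag is only a placeholder for the version you actually want to test.

```yaml
# docker-compose.yml (sketch; service names and tags are assumptions)
version: "3"
services:
  selenium-hub:
    image: selenium/hub:latest
    ports:
      - "4444:4444"                      # address the tests connect to
  chrome:
    image: selenium/node-chrome:latest   # change the tag to pin a browser version
    shm_size: 2gb                        # browsers need more shared memory than the default
    depends_on:
      - selenium-hub
    environment:
      - SE_EVENT_BUS_HOST=selenium-hub
      - SE_EVENT_BUS_PUBLISH_PORT=4442
      - SE_EVENT_BUS_SUBSCRIBE_PORT=4443
  firefox:
    image: selenium/node-firefox:latest
    shm_size: 2gb
    depends_on:
      - selenium-hub
    environment:
      - SE_EVENT_BUS_HOST=selenium-hub
      - SE_EVENT_BUS_PUBLISH_PORT=4442
      - SE_EVENT_BUS_SUBSCRIBE_PORT=4443
```

In the Codeception configuration, the WebDriver host would then point at the hub service, and the browser setting of each environment decides which node receives the test.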

BrowserStack

If we want access to more browsers or devices, a good alternative is one of the platforms that let us connect to a chosen configuration (device, operating system and browser, each in a choice of versions). At Droptica, we decided to use BrowserStack. The advantages of this solution are its easy integration with Codeception and our extensive knowledge of the tool, which we had already used for manual tests.

With such a platform, we often have access to hundreds or even several thousand different system configurations. An added value is the access to the administration panel, where we can view the current and archived results of our tests and the recordings of their progress. Choosing this option, however, means that the cost of access to such a platform has to be included in the project budget.
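
Connecting Codeception to BrowserStack can again be expressed as one more environment. The sketch below follows the pattern shown in the Codeception WebDriver documentation for cloud testing; the environment name is hypothetical, USERNAME and ACCESS_KEY are placeholders for your own credentials, and the operating system values are assumptions.

```yaml
# acceptance.suite.yml, BrowserStack environment (sketch; values are assumptions)
env:
    browserstack:
        modules:
            config:
                WebDriver:
                    host: 'hub.browserstack.com'
                    port: 80
                    browser: chrome
                    capabilities:
                        os: Windows                    # assumed target system
                        os_version: '10'
                        browserstack.user: 'USERNAME'  # placeholder credential
                        browserstack.key: 'ACCESS_KEY' # placeholder credential
```

Check the capability names against the current BrowserStack documentation before relying on them, and keep the credentials out of version control.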

Advantages and disadvantages of automated tests on different browsers

An undoubted advantage of using automated tests on various browsers is providing the QA department with a high-quality tool that significantly supports their work, and thus – should contribute to improving the overall quality of the final product.

It will certainly shorten the time that the team of testers has to spend on carrying out manual tests. If we do not check the different browser combinations automatically, our team will have to do it by hand.

As for the disadvantages – first of all, it takes time to prepare and maintain such a solution. Let me remind you that we are talking about the time spent solely on adding the possibility of testing on several browsers. The time spent on writing and preparing the tests is another issue. However, while writing, we may come across a case of browser differences that also need to be handled.

Another disadvantage is the time needed to run the automated tests, which grows with every browser we add. However, it should be kept in mind that the alternative is to perform these tests manually, which also takes time.

After considering the advantages and disadvantages, we can decide whether it will be profitable for our project to run automated tests on several browsers, but before we get there, let us try to tip the scales towards testing.

Parallel Execution, or how to reduce the disadvantages

It seems quite obvious that if we want to run our tests on more than one browser, their execution time will increase, but does it really have to?

This is where Parallel Execution, that is, running several tests simultaneously, can help us. Thanks to docker images and the appropriate configuration of the project, we can significantly reduce the time needed to run the tests. That time no longer has to increase so drastically every time we add another browser or another version of one.

Visual tests, or how to use the advantages

What differs between browsers most often is the visual side of a page, and this is where we usually put the greatest emphasis during such tests. However, it is also the type of test where it is easy to fall into a routine and miss a detail. Therefore, it seems like a good idea to use a machine to help with these tests.

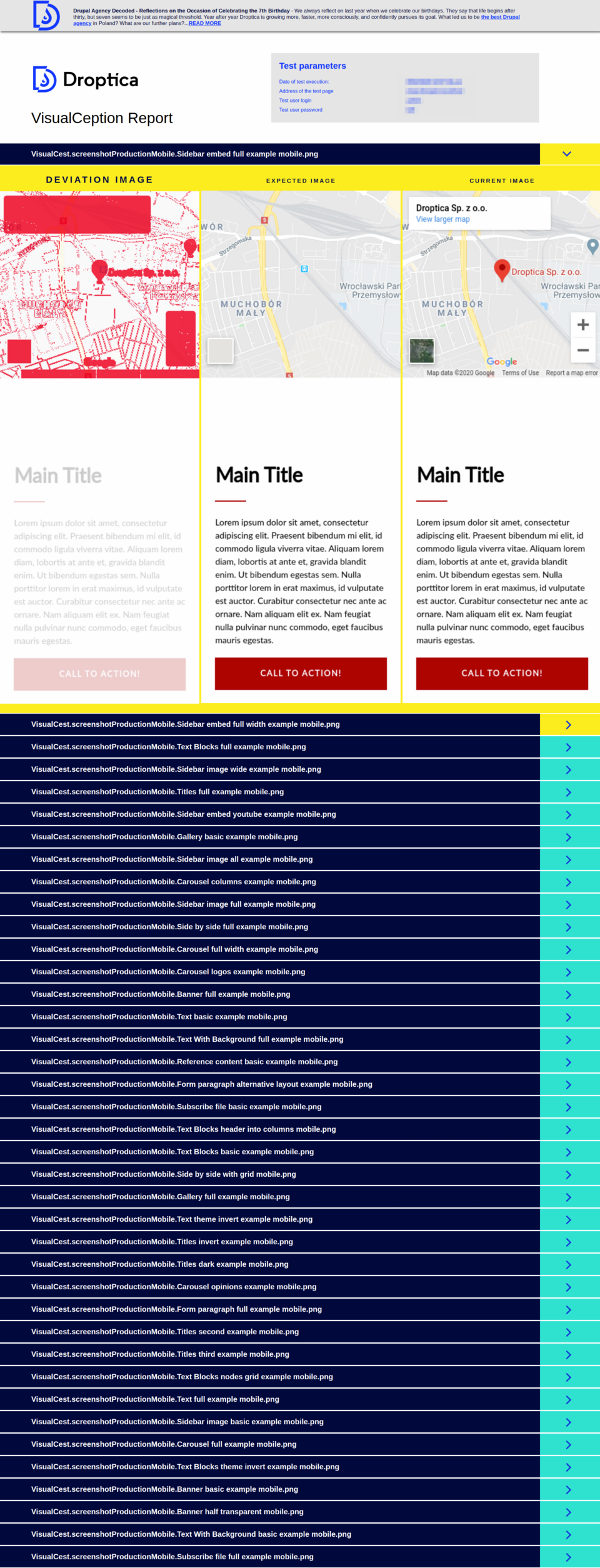

As I described in one of the previous articles, at Droptica we use Visualception for automated visual tests. The same goes for multiple browsers, because nothing changes in Visualception's settings. We get a separate report for each environment (similar to the one pictured here).

Reviewing such a report is certainly much easier and more pleasant than comparing the differences by oneself, where – despite keeping a vigilant eye – it is possible to overlook the minimal changes that the automated process will detect and highlight. An interesting case is a test that helped me detect a minimal reduction in the quality of images on a page, which would normally escape my attention.

Conclusions

Whether such tests will be needed in your project is not obvious. The decision has to be weighed up, and sometimes analysed as part of Drupal consulting, to see if the benefits exceed the costs that must be incurred.

Parallel Execution will help you minimise the disadvantages of increasing the time needed for performing automated tests for multiple configurations.

Visual tests are an ideal candidate for performing automated tests on multiple browsers.

Summary

So, to answer the question asked in the introduction to this text, that is, whether it is worth automating tests on multiple browsers: I believe it is. I encourage you to take this into account, because the advantages described in the text may bring measurable benefits.